Image: Nomad_Soul/Shutterstock

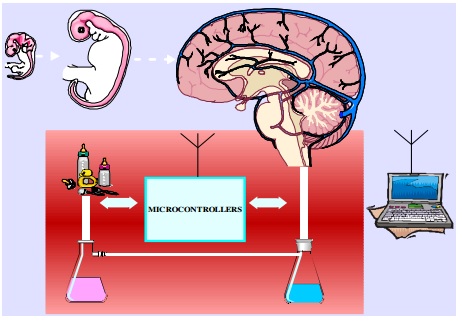

To build a conscious machine, we will need to use lab-grown biological parts. That, anyway, is the conclusion of a paper posted this week to the arXiv preprint server, courtesy of Stanford neuroscientist Dorian Aur. It suggests an artificial intelligence scheme in which consciousness is generated by a cyborg human brain grown from cellular scratch, i.e., from stem cells.

At first glance, it looks a bit like the Swiss government-funded Blue Brain Project, a high-profile attempt at creating a human brain (and lesser brains) from the ground up, albeit using supercomputer simulations. Given the right (virtual) ingredients, a brain can figure out how to build itself, the idea goes. Aur argues, however, that too much remains unknown about the exact physiology of consciousness, the blood and guts of it, and that we might be better off reprogramming actual human cells so as to allow the brain to develop (relatively) naturally. We might let a brain evolve into being.

The idea is dubious as shit. Antonio Damasio, a neuroscientist at the University of Southern California, called it "a wonderful opportunity to exercise scepticism." But it has a certain allure.

"Current attempts to replicate the human brain and consciousness on digital computers are not based on a clear assessment of brain complexity," Aur argues. "As recently predicted, these projects have little chance of success." Bad news for the Singularity crowd.

For those predictions, Aur cites a skeptical 2012 review of current brain simulation efforts, including Blue Brain; a 2012 paper in Nature on neural timing and/or our lack of knowledge about it; a 2012 report in Science that concluded, "The mechanisms of perception, cognition, and action remain mysterious because they emerge from the real-time interactions of large sets of neurons in densely interconnected, widespread neural circuits"; and several others.

The alternative? Good ol' fashioned biology.

"Our brain is a formidable computing machine," Aur told me. "Bill Gates said: 'If you invent a breakthrough in artificial intelligence, so machines can learn, that is worth 10 Microsofts,' and he is right. Building intelligent—conscious—systems that can talk, move, and solve problems like us will be the goal of many companies in the future. Such machine would be 'laughing of the mechanic aspects of its being.' That's the machine that is worth far more than 10 Microsofts."

"A hybrid system can be designed to include an evolving brain equipped with digital computers that maintain homeostasis and provide the right amount of nutrients and oxygen for the brain growth," Aur writes in the paper. "Shaping the structure of the evolving brain will be progressively achieved by controlling spatial organization of various types of cells."

Part of the problem, according to Aur, is that electrical signals in the brain are fundamentally different from those found in bare physics or electrical engineering. Within the brain, signals are also characterized by the surrounding biological and molecular structures, and they are influenced by the brain's internal or pre-sensory wave patterns and structural vibrations. These electrical rhythms may be imperceptible to us, but they influence our neurons and synapses, he says.

A protein within the brain can thus carry meaningful neurological information without really being part of a signaling pathway or transmitting information synaptically, e.g., by passing electrical signals from a neuron to another cell. It just has to be there.

As presented, Aur's idea comes across as rather batty, which is fine, but it also seems to be loaded with a fair amount of conjecture and/or assumption. The possibility of growing a brain from scratch, one that might be digitally integrated, is inferred from research involving the generation of a functional human liver (or liver tissue) from stem cells.

The twin assumptions, that it will be possible to build such a biocomputer system and that such a system will result in consciousness, don't follow from much of anything, unfortunately. On the bright side, this saves us from having to look too closely at the ethics of such an endeavor.

His conclusion is optimistic, to say the least:

> This endeavor will be equivalent to the Moon landing project or the discovery of the Higgs particle. Having included evolutionary relationships, the platform can achieve far more than competing projects (e.g. Blue Brain, Human Brain Project). Since from a nanoscale level almost everything can be manipulated at will, the project will provide a clear alternative to understand how the brain functions from a molecular level. With various types of nanosensors any change of the evolving brain can be well monitored, recorded, and then simulated on digital computers.

In the end, however, the suggestion isn't that much more ridiculous than the relentless Singularity (downloadable consciousness, basically) proselytizing of Ray Kurzweil and his legion of fans.

The ethics at first glance seem about the same, too. That is, what happens if it works? What does one do with a gloppy mixture of carbon nanotube sensors and grey matter that also happens to be aware of itself and the precariousness and novelty of its very existence? Hopefully by then we would have at least learned enough to program the thing to think on the bright side.
