Image: Shutterstock

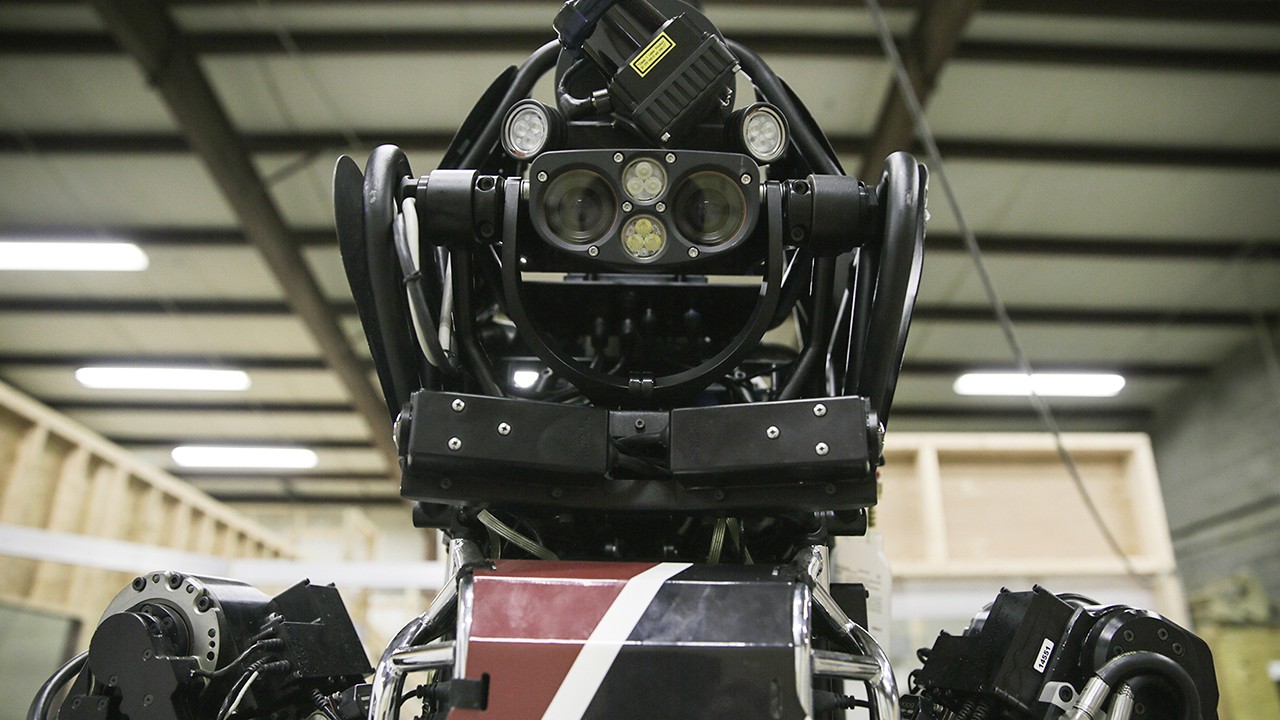

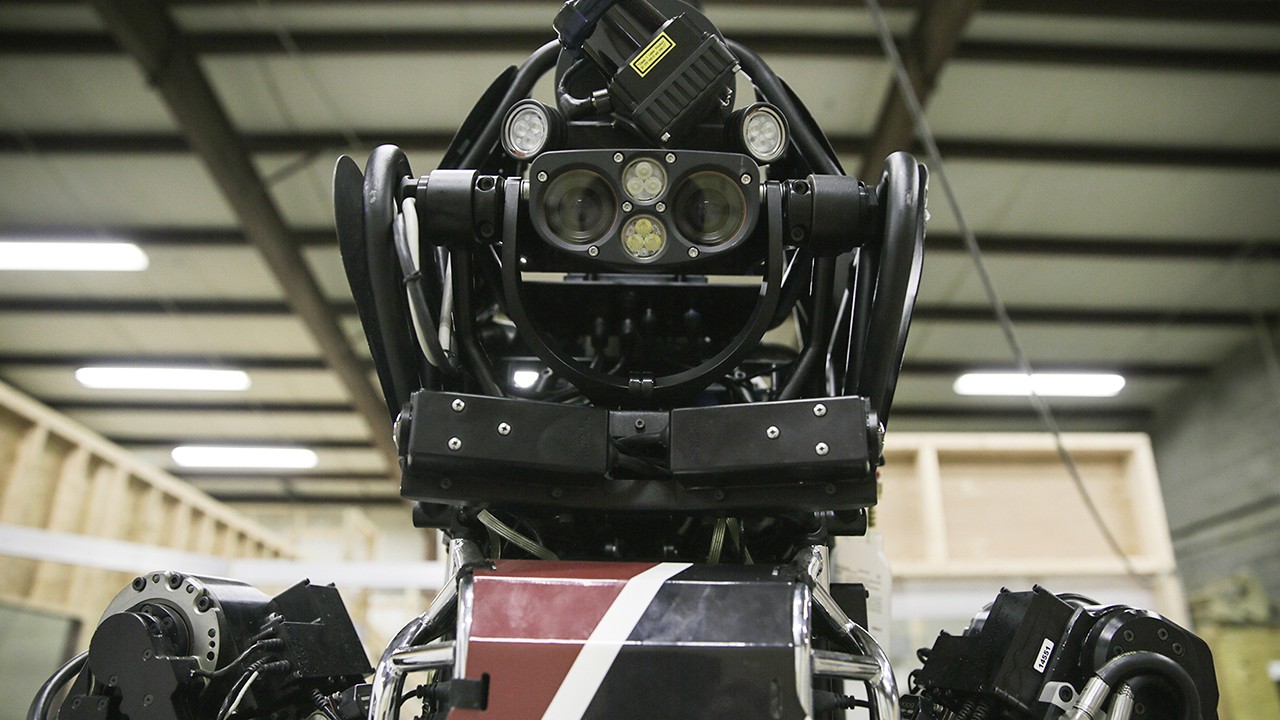

When we think about artificial intelligence, we tend to think of the humanized representations of machine learning like Siri or Alexa, but the truth is that AI is all around us, mostly running as a background process. This slow creep of AI into everything from medicine to finance can be hard to appreciate, if for no other reason than that it looks a lot different from the AI dreamt up by Hollywood in films like Ex Machina or Her. In fact, most ‘artificial intelligence’ today is quite stupid when compared to a human: a machine learning algorithm might be able to wallop a human at a specific task, such as playing a game of Go, and still struggle at far more mundane tasks like telling a turtle apart from a gun.

Nevertheless, a group of 26 leading AI researchers met in Oxford last February to discuss how superhuman artificial intelligence may be deployed for malicious ends in the future. The result of this two-day conference was a sweeping 100-page report published today that delves into the risks posed by AI in the wrong hands, and strategies for mitigating these risks.

One of the four high-level recommendations made by the working group was that “researchers and engineers in artificial intelligence should take the dual-use nature of their work seriously, allowing misuse-related considerations to influence research priorities and norms, and proactively reaching out to relevant actors when harmful applications are foreseeable.”

This recommendation is particularly relevant in light of the recent rise of “deepfakes,” a machine learning method mostly used to swap Hollywood actresses’ faces onto porn performers’ bodies. As first reported by Motherboard’s Sam Cole, these deepfakes were made possible by adapting an open source machine learning library called TensorFlow, originally developed by Google engineers. Deepfakes underscore the dual-use nature of machine learning tools and also raise the question of who should have access to these tools.

The other two areas considered to be major risks for malicious AI are digital and physical security. In terms of digital security, the use of AI to carry out cyberattacks will “alleviate the existing tradeoff between the scale and efficiency of the attacks.” This might take the form of AI performing labor-intensive cyberattacks like phishing at scale, or more sophisticated forms of attack such as using speech synthesis to impersonate a victim.

In terms of physical AI threats, the researchers looked at the increasing reliance of the physical world on automated systems. As more smart homes and self-driving cars come online, AI could be used to subvert these systems and cause catastrophic damage. Then there’s the threat of purposefully made malicious AI systems, such as autonomous weapons or micro-drone swarms.

Some of these scenarios, like plagues of autonomous micro-drones, seem pretty far off, while others, such as large-scale cyberattacks, autonomous weapons, and video manipulation, are already causing problems. In order to combat these threats, and ensure that AI is used to the benefit of humanity, the researchers recommend developing new policy solutions and exploring different “openness models” to mitigate the risks of AI. For example, the researchers suggest that central access licensing models could ensure that AI technologies don’t fall into the wrong hands, or that some sort of monitoring program could be instituted to keep tabs on the use of AI resources.

“Current trends emphasize widespread open access to cutting-edge research and development achievements,” the report’s authors write. “If these trends continue for the next 5 years, we expect the ability of attackers to cause harm with digital and robotic systems to significantly increase.”

On the other hand, the researchers recognize that the proliferation of open-source AI technologies will also increasingly attract the attention of policy makers and regulators, who will impose more limitations on these technologies. As for the specific form these policies should take, this will have to be hashed out at local, national and international levels.

“There remain many disagreements between the co-authors of this report, let alone amongst the various expert communities out in the world,” the report concludes. “Many of these disagreements will not be resolved until we get more data as the various threats and responses unfold, but this uncertainty and expert disagreement should not paralyze us from taking precautionary action today.”

Although deepfakes are specifically mentioned in the report, the researchers also highlight the use of similar techniques to manipulate videos of world leaders as a threat to political security, one of the three threat domains the report considers. One need only imagine a fake video of Trump declaring war on North Korea surfacing in Pyongyang, and the fallout that would result, to understand what is at stake. Moreover, the researchers saw the use of AI to enable unprecedented levels of mass surveillance through data analysis, and mass persuasion through targeted propaganda, as other areas of political concern.
